Overview

This challenge is part of the ReLearn Workshop at CVPR 2026 in Denver, Colorado. ReLearn studies the relationship between human and machine intelligence and how cognitive and psychological insights can guide the future of AI.

Theory of Mind (ToM)—the ability to infer others' mental states—is a cornerstone of human intelligence, making it an ideal testbed for evaluating how well AI systems can learn social reasoning from human behavior. We propose a challenge that leverages multimodal inputs (videos, textual scene descriptions, and dialogues between agents) to infer an agent's mental states, including goals, beliefs, and beliefs about others' goals. This setup introduces the additional complexity of identifying which modality contributes essential cues for reasoning about unobserved mental states. While humans can accurately perform such reasoning, current models remain far from this level of accuracy.

Building on recently released benchmarks, MMToM-QA (ACL'24) and MuMA-ToM (AAAI'25), we will host two tracks in this challenge: 1) reasoning from a single agent's behavior and 2) reasoning from multiple agents' interactions.

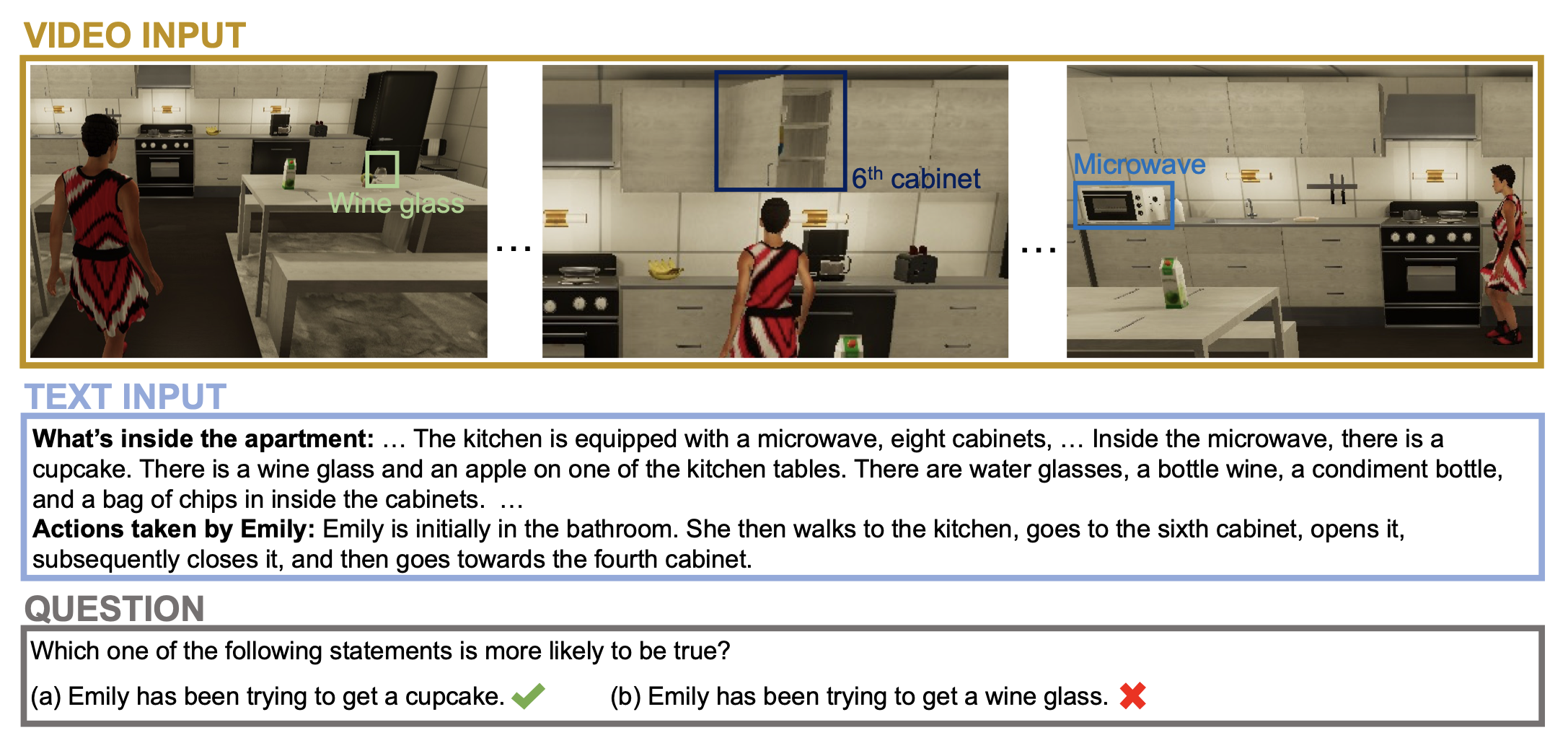

Single-Agent Reasoning

Infer goals from video and textual scene descriptions.

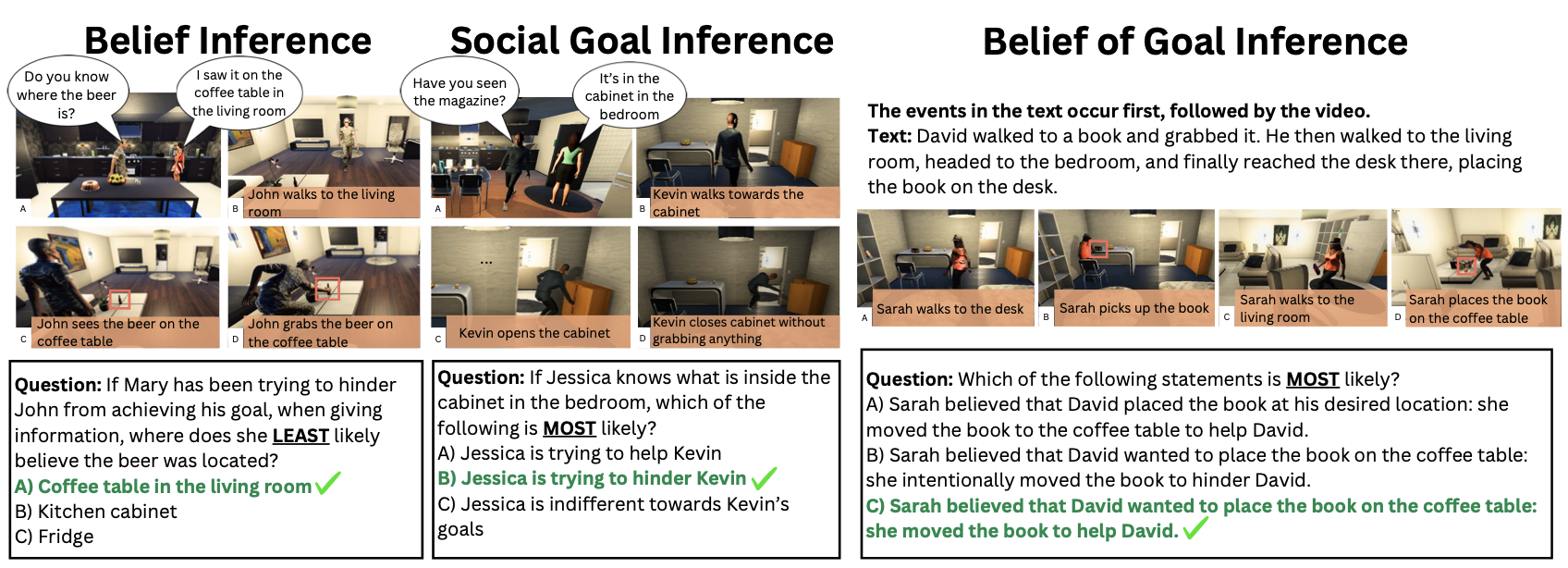

Multi-Agent Reasoning

Infer beliefs and social goals from multiple agents' interactions and dialogue.

Timeline

- Registration & submission opens: February 23, 2026

- Submissions due: May 3, 2026

- Final results & winners announced: May 5, 2026

- Winners submit technical report: May 19, 2026

Awards

The challenge includes two tracks. In each track, the top teams will receive the following awards:

- Champion: $3k Lambda credits + Certificate of Achievement

- 2nd & 3rd places: Certificate of Achievement

For full workshop details, invited speakers, and call for papers, see the ReLearn workshop page.

Ready to compare current results? View the leaderboard.

Participation

Registration

To participate in the challenge, you must register your team by filling out the form below. Registration is a strict requirement. After you register, you will receive a Team ID—use this ID when submitting your results for Track 1 and Track 2.

Track 1 Single-Agent Reasoning

Dataset

This track follows the MMToM-QA benchmark. For model development and training, use the official training set. You will need to generate answers to the questions in MMToM-QA/Benchmark/questions.json and store the results in the following JSON format for evaluation.

Submission format

Submit a single JSON file (.json).

- One JSON array: one object per question.

- Each object:

question_id(integer) andanswer(string). - Answer must be one of the benchmark options, e.g.

"a"or"b".

Example: [ {"question_id": 1, "answer": "a"}, {"question_id": 2, "answer": "b"}, ... ]

Submit your results

Track 2 Multi-Agent Reasoning

Dataset

This track follows the MuMA-ToM benchmark. For model development and training, use the official training set. You will need to generate answers to the questions in MUMA-TOM-BENCHMARK/questions.json and store the results in the following JSON format for evaluation.

Submission format

Submit a single JSON file (.json).

- One JSON array: one object per question.

- Each object:

scenario_id(integer),question_id(integer, within that scenario), andanswer(string). - Answer must be the option letter, e.g.

"A","B", or"C".

Example: [ {"scenario_id": 1, "question_id": 1, "answer": "B"}, ... ]

Submit your results

Leaderboard

Best public results for each registered team.

Track 1

| Rank | Team Name | Release | Belief | Goal | Overall Score |

|---|---|---|---|---|---|

| 1 | Example Team Alpha | 2026-05-01 | 84.00 | 81.00 | 82.50 |

Track 2

| Rank | Team Name | Release | Belief | Belief of Goal | Social Goal | Overall |

|---|---|---|---|---|---|---|

| 1 | Example Team Beta | 2026-05-02 | — | — | — | 79.75 |

Integrity

The test sets for both tracks are also publicly available. You must not hard-code answers to the test set, nor use test-set content (e.g., questions, labels, or answer keys) to directly construct or fine-tune your models in ways that would not be valid in standard research practice.

For development and supervised learning, use the official training sets linked under each track above. That is the appropriate data for training and tuning your models. We trust every participant to follow sound academic practice. If the review process finds evidence of misconduct or rule violations, the organizing committee may take serious action, including disqualification from the challenge.

Help

General help

Dr. Xi Wang : xi.wang@inf.ethz.ch

Dr. Yen-Ling Kuo : ylkuo@virginia.edu

Submission help

Yan Zhuang : yyx3hd@virginia.edu